Event operations teams don’t hate technology; they push back when technology takes decisions out of their hands without making responsibility disappear. When something goes wrong during a live event, the accountability still sits with the ops team, even if the decision was made by a system they cannot see into, pause, or override.

That distinction makes a huge difference, especially now, when events are no longer treated as discretionary marketing line items. They’re under sharper scrutiny from finance, procurement, and leadership, with ROI, risk, and operational discipline firmly in the spotlight.

So when AI assistants enter the conversation, the resistance you hear from ops teams seems… practical.

They’re the ones accountable when registration breaks, when agendas change at the last minute, when access issues escalate on the floor, or when something goes wrong during a live moment that cannot be replayed. Now, handing parts of that responsibility to an AI assistant means trusting it to behave predictably under pressure, escalate at the right time, and never act beyond the authority the team has explicitly given it.

PS: This blog isn’t about why AI is ‘inevitable’ for events. It’s about how to introduce AI without breaking trust, and what human-in-the-loop actually means inside real event operations.

Why event ops teams are sceptical of AI (and why that scepticism is rational)

Live events are unforgiving environments where there is no sandbox once the doors open and no quiet rollback when something goes wrong. Issues surface immediately, often in front of attendees, sponsors, speakers, and senior leadership, leaving operations teams to resolve problems in real time rather than in the next sprint or release cycle.

That alone makes ops teams cautious about anything that behaves unpredictably.

But there’s more history behind the hesitation.

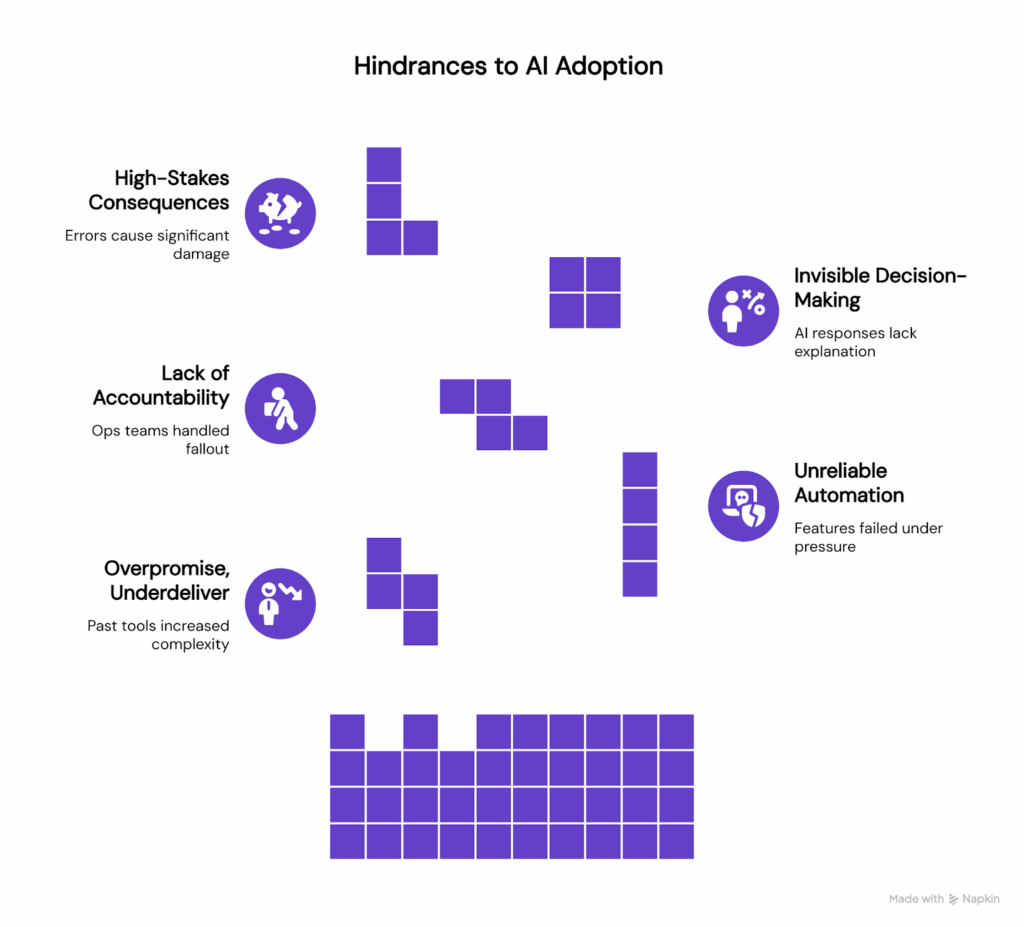

The legacy problem: overpromise, underdeliver

Event technology has spent years promising simplification while quietly delivering more complexity.

For many operations teams, past tools didn’t remove work so much as redistribute it. Systems that claimed to automate coordination introduced new dashboards to monitor. “Intelligent” features worked well in controlled demos but became unreliable under live pressure. Instead of reducing operational load, they demanded constant supervision during the very moments teams needed focus the most.

Over time, this created a pattern. New tools were treated with caution, not because teams resisted innovation, but because they had learned the cost of misplaced trust. When something broke, the accountability never sat with the software. It sat with the operations team managing the fallout.

And then AI enters this environment carrying that history.

When ops teams hear “AI assistant,” what they often imagine is not speed or efficiency, but another system that might respond confidently without explaining why. A system that appears helpful until conditions change, and then behaves unpredictably when stakes are highest. That fear becomes sharper when decision-making is invisible.

Operations teams don’t just need answers to be correct. They need to understand how those answers were arrived at. In live environments, traceability is not a nice-to-have. It is the difference between resolving an issue quickly and compounding it.

If an assistant gives an incorrect response about access, agenda changes, or passes, the risk is not just misinformation. It can mean misdirected attendees, overcrowding, security concerns, or reputational damage in front of sponsors and speakers. Without visibility into what rule was applied, what source was used, or why a response was chosen, teams are left reacting rather than controlling the situation.

This is why scepticism surfaces first in event operations teams, long before it reaches marketing or strategy. Ops teams sit closest to risk. They experience the immediate consequences of system behaviour, and they are expected to recover in real time when things go wrong.

For AI to earn trust in this environment, it has to do more than work. It has to be explainable, predictable, and controllable under pressure.

How is AI being added to event ops today?

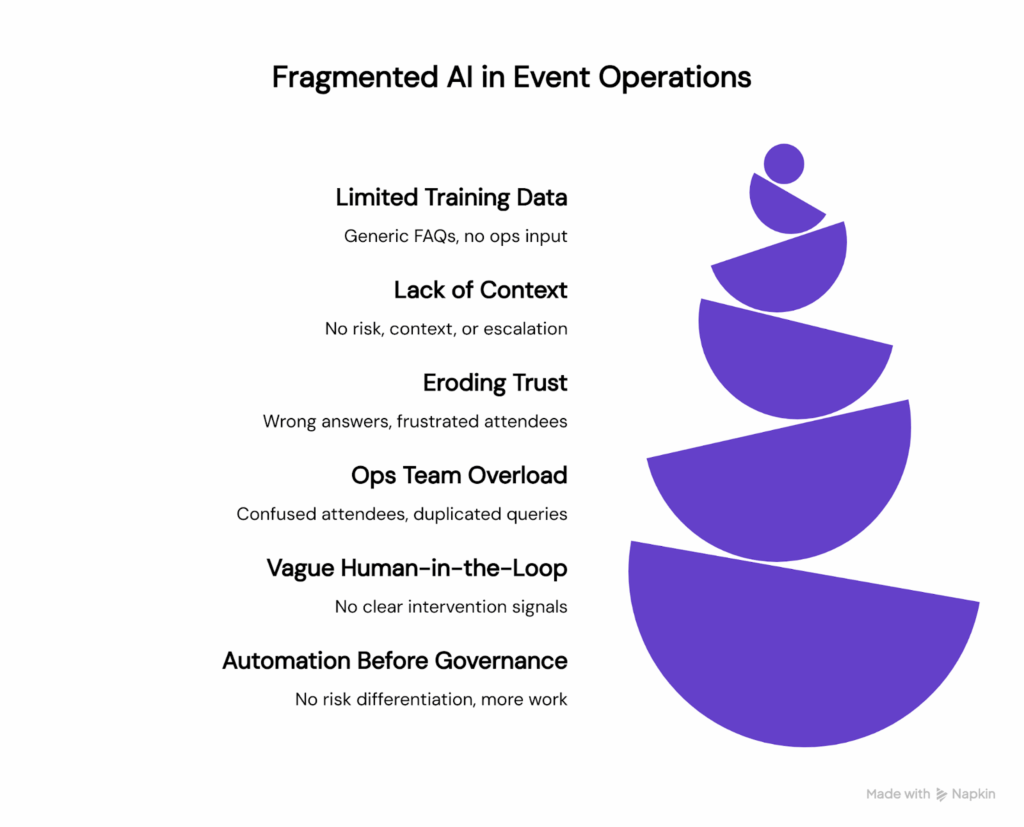

In most organisations, AI enters event operations in a fragmented way.

A chatbot is added to the event website a few weeks before launch. It is trained on a handful of generic FAQs, usually pulled from public-facing pages. There is little involvement from the operations team beyond a quick review, because the assumption is that the assistant will “just handle basic questions.”

During the event, an attendee asks a simple question: Where do I find the agenda for day two? The AI responds correctly and builds confidence.

But then… the questions change.

Another attendee asks about upgrading their pass. A speaker asks about a last-minute session change. Someone flags an access issue at the venue. The AI tries to respond anyway, pulling from outdated information or generic policies, because it has no concept of risk, context, or escalation.

From the attendee’s perspective, this is frustrating. Answers sound confident but are simply wrong. Attendees are sent to the wrong pages or given outdated instructions. Trust erodes quickly, and they end up contacting support anyway.

From the ops team’s perspective, it’s a lot worse.

They are suddenly dealing with confused attendees, duplicated queries, and issues they did not even know the AI was responding to. There is no clear record of what was said, which source was used, or why the response was given. The assistant exists alongside operations, not inside their workflow.

This separation is where trust breaks.

The same pattern shows up in how “human-in-the-loop” is implemented. On paper, there is a manual override. In reality, no one knows where it lives or when it should be used. When a problem surfaces during a live moment, the ops team has no clear signal that intervention is required, no context from the prior interaction, and no fast way to regain control.

Live events do not allow for vague control models. If a human needs to step in, they need to know when, how, and with what information. Without that clarity, human-in-the-loop becomes a comforting phrase rather than a usable operating model.

The final breakdown happens when automation is introduced before governance.

The AI treats all questions the same. Low-risk queries like shuttle timings are handled with the same confidence as high-risk ones like access changes or speaker updates. There are no boundaries, no escalation rules, and no differentiation between what can safely be automated and what requires human judgment.

Ops teams see this immediately. Systems that cannot distinguish between low and high risk create more work, not less. They generate clean demos and messy live outcomes.

This is why many AI initiatives in event operations stall or get quietly switched off. Not because AI lacks capability, but because it is introduced out of sequence, without the operational foundation required for trust.

What human-in-the-loop actually means in event operations

Human-in-the-loop does not mean humans approving every response. That would slow teams down and defeat the purpose of using AI in the first place.

In event operations, human-in-the-loop starts much earlier.

It means humans are involved before the AI is ever switched on. Ops teams define the scope, rules, escalation logic, and acceptable behaviour upfront, so the system operates on a foundation that has already been human-approved. Once live, humans intervene by exception, not by default.

This is what makes human-in-the-loop practical at scale.

Trustworthy AI in event operations rests on four non-negotiable principles, all decided by humans from the start.

1. Clear boundaries

AI assistants must operate within an explicitly defined scope:

- By event phase (pre-event, live, post-event)

- By query type (logistics vs exceptions)

- By risk level

When AI knows exactly where it is allowed to act, ops teams stop worrying about surprises.

Here’s an example:

An assistant can answer agenda timings and venue directions during live days, but automatically escalates any access, pass change, or speaker-related query to a human.
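To make the boundary idea concrete, here is a minimal sketch of how a scope policy along these lines could be expressed. The topic names, the `decide` function, and the two sets are hypothetical and chosen purely for illustration; a real deployment would use the ops team's own taxonomy and tooling.

```python
from enum import Enum


class Phase(Enum):
    PRE_EVENT = "pre-event"
    LIVE = "live"
    POST_EVENT = "post-event"


class Action(Enum):
    ANSWER = "answer"
    ESCALATE = "escalate"


# Hypothetical topic names, assumed for this sketch only.
# Topics the assistant may answer, listed per event phase.
ALLOWED_TOPICS = {
    Phase.LIVE: {"agenda_timings", "venue_directions"},
}

# High-risk topics that always go to a human, whatever the phase.
ALWAYS_ESCALATE = {"access", "pass_change", "speaker"}


def decide(phase: Phase, topic: str) -> Action:
    """Answer only topics explicitly allowed for this phase; escalate the rest."""
    if topic in ALWAYS_ESCALATE:
        return Action.ESCALATE
    if topic in ALLOWED_TOPICS.get(phase, set()):
        return Action.ANSWER
    # Anything not explicitly allowed is treated as out of scope.
    return Action.ESCALATE
```

The design choice worth noting is the default: a topic nobody has approved is escalated rather than answered, so the assistant cannot drift beyond the scope the team defined.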

2. Predictable escalation paths

When AI reaches a limit, escalation should be automatic and structured:

- The right human is notified

- The full context of the conversation is passed along

- No conversation disappears into a void

This mirrors how ops teams already work under pressure.

Here’s an example:

If an attendee asks a question the assistant cannot answer confidently, the query is routed to the ops inbox with the full chat history, rather than returning a generic fallback response.
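As a rough illustration of structured escalation, the sketch below hands a low-confidence question to a human along with the whole conversation. The `OPS_INBOX` name, the 0.8 threshold, and the idea of a numeric confidence score are assumptions made for this example, not a description of any particular product.

```python
from dataclasses import dataclass, field
from typing import List, Optional

OPS_INBOX = "ops-inbox"  # hypothetical destination for escalations


@dataclass
class Conversation:
    attendee_id: str
    messages: List[str] = field(default_factory=list)


@dataclass
class Escalation:
    recipient: str
    reason: str
    history: List[str]


def handle(
    conversation: Conversation,
    question: str,
    confidence: float,
    threshold: float = 0.8,  # assumed value; the ops team would set this
) -> Optional[Escalation]:
    """Return an Escalation carrying the full history when confidence is too low."""
    conversation.messages.append(question)
    if confidence >= threshold:
        return None  # the assistant may answer; no human is needed
    return Escalation(
        recipient=OPS_INBOX,
        reason="low confidence",
        history=list(conversation.messages),  # copy so the record stays stable
    )
```

Because the escalation carries the chat history, the person picking it up does not have to ask the attendee to repeat themselves.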

3. Decision visibility

Ops teams need to be able to see how decisions are made:

- What source was referenced

- What guideline was applied

- Why a specific response was chosen

Visibility builds confidence far more effectively than performance metrics alone.

Here’s an example:

An ops lead can review why the assistant linked to a specific agenda page, including which approved document was used to generate the response.
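One way to picture decision visibility is a small record written alongside every response. The field names and the `to_log_line` helper below are hypothetical; the point is only that the source, the guideline, and the reason are captured at the moment the answer is given.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical audit entry stored for each assistant response."""

    question: str
    response: str
    source_document: str  # which approved document was referenced
    guideline: str        # which rule or guideline was applied
    rationale: str        # why this response was chosen


def to_log_line(record: DecisionRecord) -> str:
    """Serialise a record as one JSON line so it can be searched later."""
    return json.dumps(asdict(record), sort_keys=True)
```

A usage example, with made-up values:

```python
record = DecisionRecord(
    question="Where do I find the agenda for day two?",
    response="See the day two agenda page.",
    source_document="approved-agenda-v3",
    guideline="logistics-answers",
    rationale="Exact match on an approved FAQ entry",
)
print(to_log_line(record))
```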

4. Immediate human intervention

Humans must be able to:

- Pause responses

- Override behaviour

- Adjust rules mid-event

Control should be accessible, not buried in admin settings no one can find during a live issue.

Here’s an example:

During a last-minute agenda change, the ops team temporarily pauses the assistant’s responses until updated information is reviewed and approved.
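The pause-and-override idea can be sketched as a small control object that sits between the assistant and the attendee. The class and method names here are hypothetical, and a production system would also need access control and persistence, which this sketch leaves out.

```python
from typing import Dict, Optional


class AssistantControl:
    """Hypothetical live control switch the ops team can reach during an event."""

    def __init__(self) -> None:
        self._paused = False
        self._overrides: Dict[str, str] = {}

    def pause(self) -> None:
        self._paused = True

    def resume(self) -> None:
        self._paused = False

    def set_override(self, topic: str, approved_text: str) -> None:
        """Replace the assistant's answer for a topic with human-approved text."""
        self._overrides[topic] = approved_text

    def respond(self, topic: str, draft: str) -> Optional[str]:
        """Return the text to send, or None when a human should take over."""
        if self._paused:
            return None
        return self._overrides.get(topic, draft)
```

In the agenda-change scenario, the team would call `pause()`, review the new schedule, call `set_override("agenda", ...)` with the approved wording, and then `resume()`.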

In essence, human-in-the-loop means humans define the system, the AI operates within those decisions, and intervention remains possible whenever reality shifts.

From automation to agentic assistants

Most event technology automation has failed for the same reason: it tried to replace human judgment instead of supporting it.

Agentic assistants work differently.

Rather than executing fixed workflows blindly, an agentic assistant operates as a controlled participant inside event operations. It understands where it is allowed to act, when it must escalate, and when it should step aside entirely.

In practical terms, an agentic assistant:

- Acts within clearly defined operational rules

- Escalates instead of improvising when confidence is low

- Adapts to changing conditions without overstepping authority

This design matters because event operations are not static. Live environments change by the minute, and teams are increasingly expected to do more with fewer resources while meeting higher standards of governance, risk management, and accountability in 2026.

When designed properly, agentic AI does not make decisions for operations teams. It takes on the predictable, high-volume work so humans can apply judgment where it matters most.

That shift is what unlocks real operational value.

Here’s an example of human-led AI design (in practice)

It’s easier to understand human-led AI when you see how it shows up in everyday work, rather than as a set of abstract principles.

Here’s what that looks like in practice, and why it matters to the people running awards programmes day to day.

1. Ops teams define the scope before anything goes live

Before an assistant is ever switched on, the team running the programme decides what it should and should not handle. That includes which questions it can answer confidently, where it needs to escalate, and what level of certainty is required before it responds.

The benefit here is control. Teams are not reacting to unexpected behaviour or explaining away incorrect answers later. They know, from the start, where the assistant is helpful and where a human should step in. That reduces risk and avoids the uncomfortable moments where an entrant receives guidance that feels off or misleading.

In day-to-day terms, it means fewer surprises and far less time spent firefighting.

2. Context comes from the way teams already work

Instead of pulling information from the open internet, the assistant is grounded in material teams already trust. Internal runbooks, event-specific documentation, approved FAQs, and official guidelines form the knowledge base.

This matters because it keeps answers consistent with how the programme is actually run. Entrants receive guidance that reflects real rules and real expectations, not generic interpretations. Internally, teams do not need to correct or override the assistant because it is already aligned with their processes.

On a practical level, this reduces back-and-forth, prevents contradictory advice, and protects the integrity of the programme.

3. A live control layer keeps the assistant visible

Rather than setting things up once and hoping for the best, teams work with a live control layer. This allows them to adjust scope as the entry period evolves, monitor how questions are being handled, and intervene immediately if something changes.

The benefit here is confidence. The assistant does not disappear into the background as a black box. Teams can see what is happening and step in when needed.

In daily operations, this means changes in categories, criteria, or deadlines can be reflected quickly without confusion, and support remains aligned even as the programme moves.

4. Clear fallback rules protect trust

Perhaps the most important principle is knowing when to stop. When confidence drops or a question becomes ambiguous, the assistant is designed to escalate rather than guess.

That restraint builds trust over time. Entrants feel supported rather than misled, and teams know that edge cases are being handled with care rather than forced automation.

In practice, this reduces the risk of incorrect guidance while reassuring entrants that there is always a human safety net behind the experience.

So, what does this unlock for event operations teams?

When AI is introduced this way, the benefits stop being theoretical. They show up in how teams work day to day, especially during live periods.

1. Lower cognitive load during live events

Repetitive, low-risk attendee questions are handled without constant interruption. Operations teams spend less time firefighting and more time managing exceptions that genuinely need human judgment.

This is exactly what Terrapinn saw across its global portfolio. By deploying AI assistants with clear boundaries and full editorial control, their ops teams were able to offload thousands of routine queries without losing visibility or oversight. Over 4,000 attendee questions were answered instantly across digital channels, freeing teams to focus on higher-value operational work.

2. Faster responses without sacrificing control

Speed improves where it is safe to do so, while humans stay in control where stakes are high. AI handles the predictable. Ops teams handle the critical.

For Terrapinn, this translated into a 99 percent successful response rate and meaningful cost savings, without relying on developers or increasing headcount. Crucially, fallback rules ensured that when the AI could not answer confidently, it stepped aside rather than guessing.

3. Consistency across large event portfolios

Clear rules scale better than manual effort. Once boundaries, tone, and escalation logic are defined, they can be applied consistently across dozens of events while still allowing for event-specific nuance.

Terrapinn launched tailored AI concierges across email, websites, apps, and WhatsApp from a single control layer, saving up to 80 percent of operational time on digital channels and maintaining a consistent experience across events.

4. A healthier relationship with AI internally

When teams can see how AI behaves, intervene when needed, and measure impact clearly, AI stops feeling experimental. It becomes dependable infrastructure.

In Terrapinn’s case, what began as a high-pressure operational requirement is now being explored as a repeatable, scalable part of their workflow, because the ops team trusts it.

Want to see how this works in practice?

You can explore the full Terrapinn story, including metrics, setup, and operational design, in the detailed case study.

👉 Download the Terrapinn case study

FAQs on how to introduce AI to event ops without losing team trust

Q. What does human-in-the-loop AI actually mean for event operations?

In event operations, human-in-the-loop means humans define where AI can act, when it must escalate, and how decisions can be overridden, especially during live moments. It is not about approving every response manually. It is about maintaining control, visibility, and accountability while allowing AI to handle low-risk, repetitive tasks.

Q. Why are event operations teams often sceptical of AI assistants?

Ops teams are responsible for live outcomes where errors are immediately visible. Many have experienced event technology that overpromised automation but added operational risk instead. The scepticism usually comes from concerns around loss of oversight, unclear escalation paths, and AI systems making decisions without transparency during high-pressure situations.

Q. Is AI safe to use during live events?

AI can be safe during live events if it is constrained by clear rules. Low-risk tasks like answering logistics questions or surfacing known information can be handled by AI, while high-risk scenarios should always escalate to humans. The issue is not AI itself, but deploying it without boundaries or intervention controls.

Q. What kinds of event operations tasks should AI handle?

AI is best suited for:

- High-volume, repetitive attendee queries

- Information retrieval from approved internal documents

- Pattern detection across attendee questions or operational signals

Tasks involving exceptions, access changes, security, or judgment calls should always involve human decision-making.

Q. How do operations teams retain control once AI is deployed?

Control comes from:

- Defining scope and rules before deployment

- Having visibility into how decisions are made

- Being able to pause, override, or adjust AI behaviour at any point

If humans cannot intervene easily during a live event, trust breaks quickly.

Q. What’s the difference between automation and agentic AI in event ops?

Traditional automation follows fixed workflows and breaks when conditions change. Agentic AI operates within defined rules but can adapt and escalate when uncertainty increases. For event operations, this matters because live environments are fluid and require systems that know when not to act.

Q. How does AI help event ops teams without replacing them?

AI reduces cognitive load by handling predictable tasks and surfacing useful signals, allowing ops teams to focus on judgment-heavy decisions. It supports human work rather than replacing it, especially as teams are expected to do more with fewer resources in 2026.

Q. How should event organisations introduce AI without losing team trust?

AI should be introduced with operations teams, not to them. Trust builds when teams are involved early, understand the boundaries, and retain control during live moments.

In practice, that means starting with low-risk use cases, making decision logic visible, defining clear escalation paths, and treating AI as part of operational infrastructure rather than an open-ended experiment. Teams need to see how AI behaves under real conditions before relying on it.

This is why a phased rollout matters. Approaches like our 3P+ model (Plan, Play, Prove) help organisations move from exploration to confidence by first aligning on operational goals, then testing in controlled environments, and only scaling what proves reliable in practice. Trust grows when behaviour is predictable, measurable, and repeatable over time.